
In this tutorial, we’ll explore how to analyze large text datasets with LangChain and Python to find interesting data in anything from books to Wikipedia pages.

AI is such a big topic nowadays that OpenAI and libraries like LangChain barely need any introduction. Nevertheless, in case you’ve been lost in an alternate dimension for the past year or so, LangChain, in a nutshell, is a framework for developing applications powered by language models, allowing developers to use the power of LLMs and AI to analyze data and build their own AI apps.

Use Cases

Before getting into all the technicalities, I think it’s nice to look at some use cases of text dataset analysis using LangChain. Here are some examples:

Prerequisites

To follow along with this article, create a new folder and install LangChain and OpenAI using pip:

File Reading, Text Splitting and Data Extraction

To analyze large texts, such as books, you need to split them into smaller chunks. Books can contain hundreds of thousands to millions of tokens, and since most LLMs can’t process that many tokens in a single request, there’s no practical way to analyze such texts as a whole without splitting them.

Also, instead of saving individual prompt outputs for each chunk of a text, it’s more efficient to use a template for extracting data and putting it into a format like JSON or CSV. In this tutorial, I’ll be using JSON.

Here is the book that I’m using for this example, which I downloaded for free from Project Gutenberg. This code reads the book Beyond Good and Evil by Friedrich Nietzsche, splits it into chapters, makes a summary of the first chapter, extracts the philosophical messages, ethical theories and moral principles presented in the text, and puts it all into JSON format.

As you can see, I used the “gpt-3.5-turbo-1106” model to work with larger contexts of up to about 16,000 tokens and a temperature of 0.3 to give it a bit of creativity. You can experiment with the temperature and see what works best for your use case.

The extracted data gets put into JSON format.
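To make the "template" idea concrete, here is a minimal sketch of what an extraction schema could look like, plus a helper that checks a model's JSON reply against it. The field names (`summary`, `messages`, `ethics`) and the `parse_extraction` helper are my own illustrative choices based on the fields described above, not the names used in the original code, and it uses only the standard library rather than any LangChain API.

```python
import json

# Hypothetical schema: the keys mirror what the article says gets extracted
# (a summary, philosophical messages, ethical theories / moral principles).
CHAPTER_SCHEMA = {
    "type": "object",
    "properties": {
        "summary": {"type": "string"},
        "messages": {"type": "array", "items": {"type": "string"}},
        "ethics": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["summary", "messages", "ethics"],
}


def parse_extraction(raw_json: str, schema: dict = CHAPTER_SCHEMA) -> dict:
    """Parse a model's JSON reply and check that every required key is present."""
    data = json.loads(raw_json)
    missing = [key for key in schema["required"] if key not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data
```

A check like this only confirms that the required keys exist. It doesn't validate types, so a full JSON Schema validator would be the next step if you need stricter guarantees.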

The code then reads the text file containing the book and splits it by chapter. The chain is then given the first chapter of the book as text input:
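As a rough illustration of the chapter-splitting step, here is a small standard-library sketch. It assumes chapter headings sit on their own line and look like "CHAPTER I" or "CHAPTER IV. SOME TITLE"; that pattern and the `split_into_chapters` name are my assumptions, so check them against the actual text file before relying on them.

```python
import re

# Assumption: each chapter starts on a line like "CHAPTER I" or "CHAPTER IV. TITLE".
CHAPTER_HEADING = re.compile(r"^CHAPTER [IVXLC]+\b.*$", re.MULTILINE)


def split_into_chapters(text: str) -> list[str]:
    """Return one string per chapter, each starting at its heading line."""
    starts = [match.start() for match in CHAPTER_HEADING.finditer(text)]
    # Each chapter ends where the next one starts; the last ends at the end of the text.
    ends = starts[1:] + [len(text)]
    return [text[start:end].strip() for start, end in zip(starts, ends)]
```

Anything before the first heading (a preface, license text) is dropped, and a table of contents that repeats the same heading format would show up as extra, very short "chapters" that you would need to filter out.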

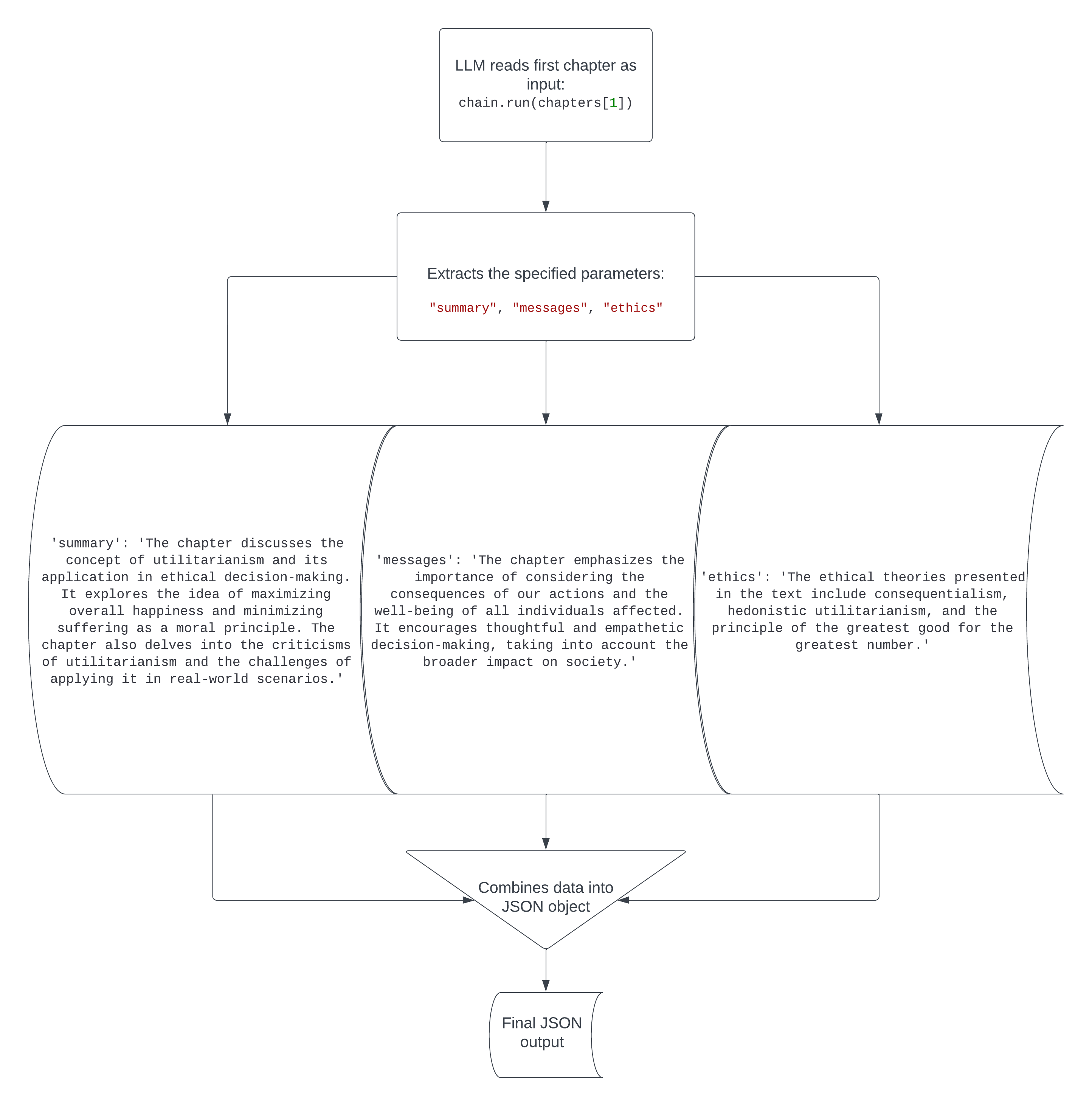

Here’s the output of the code:

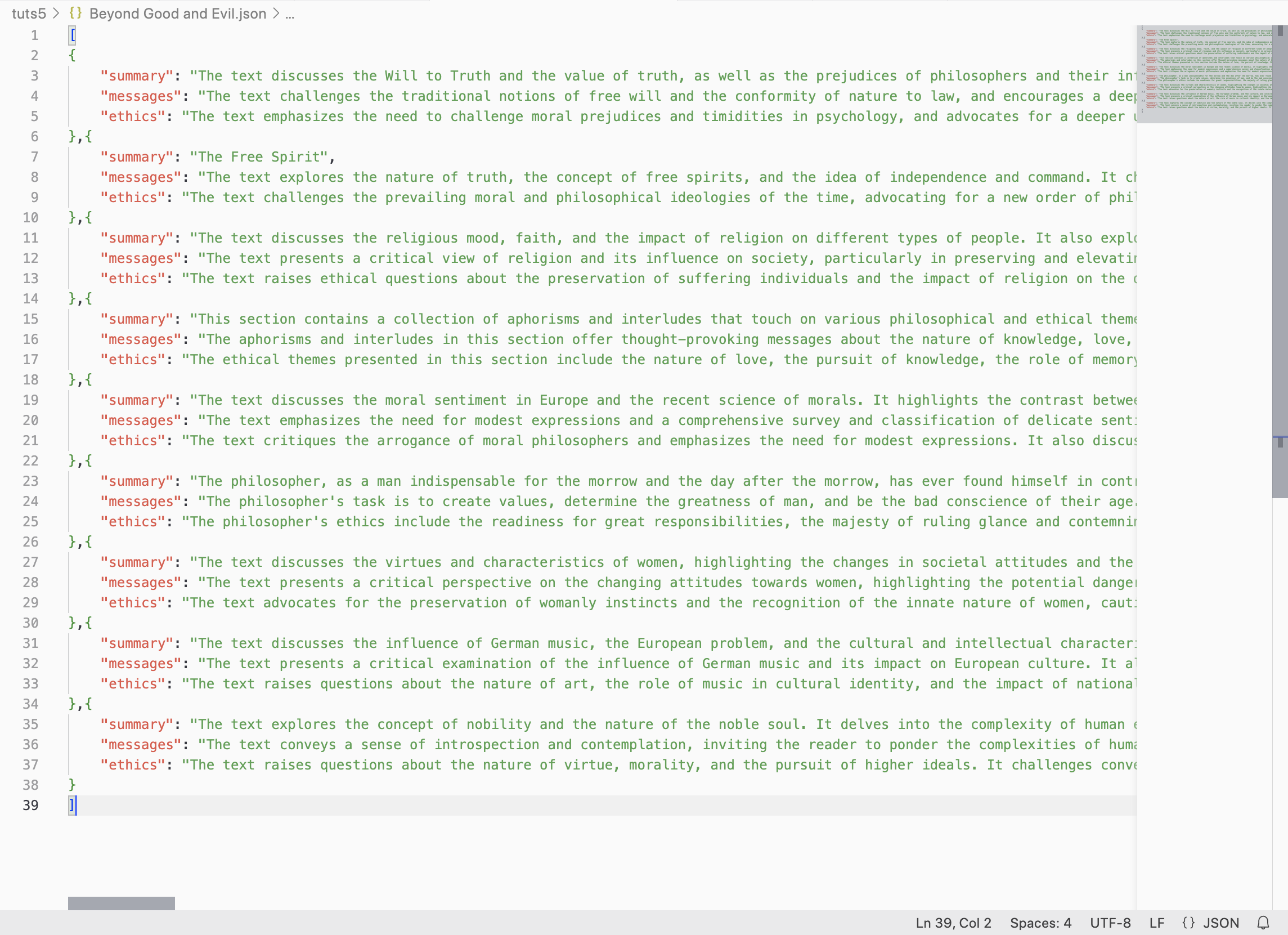

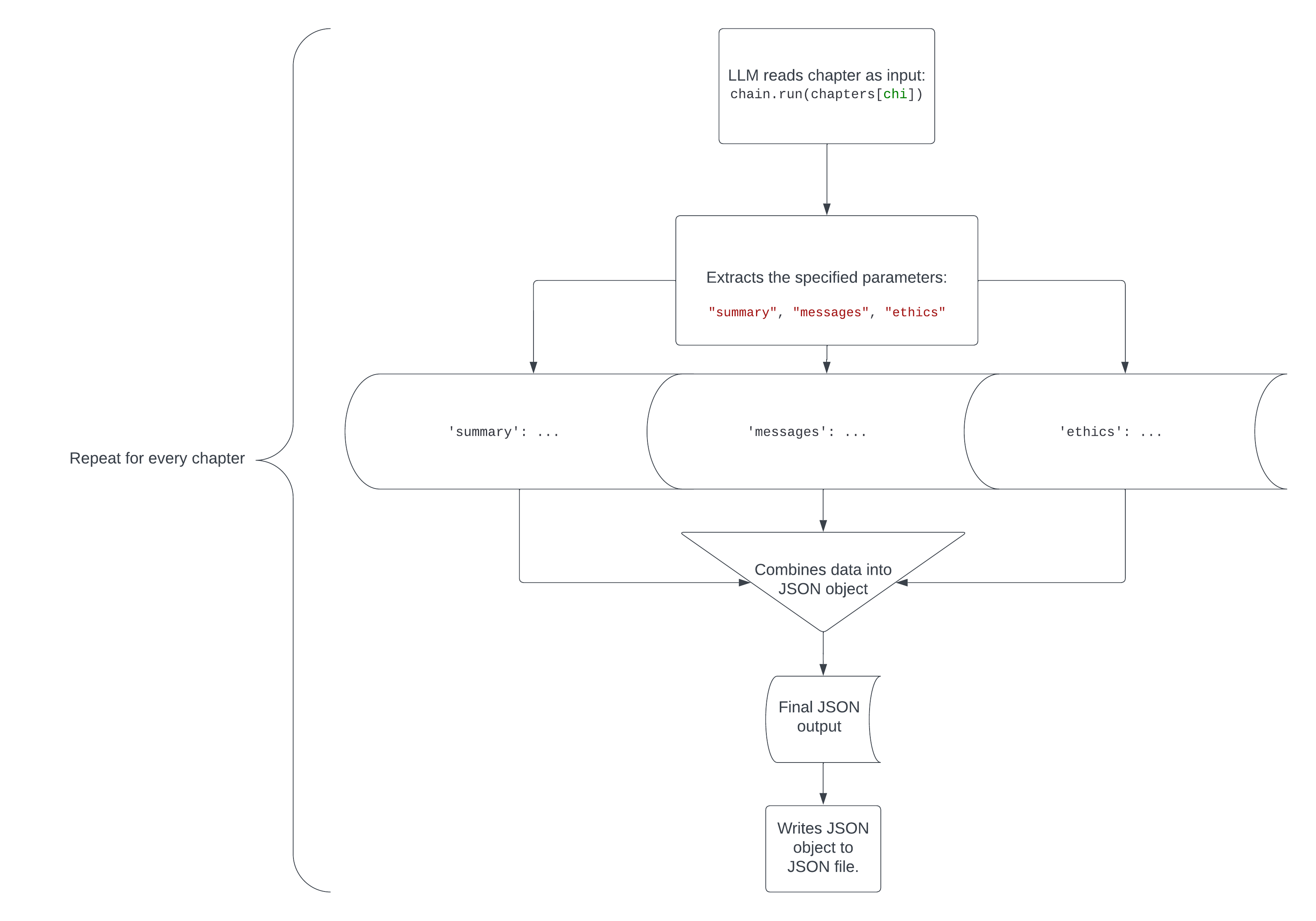

Pretty cool. Philosophical texts written 150 years ago are pretty hard to read and understand, but this code instantly translated the main points from the first chapter into an easy-to-understand report of the chapter’s summary, message and ethical theories/moral principles. The flowchart below will give you a visual representation of what happens in this code.

Now you can do the same for all the chapters and put everything into a JSON file. This is the part of the code that analyzes every chapter and puts the extracted data for each in a shared JSON file:

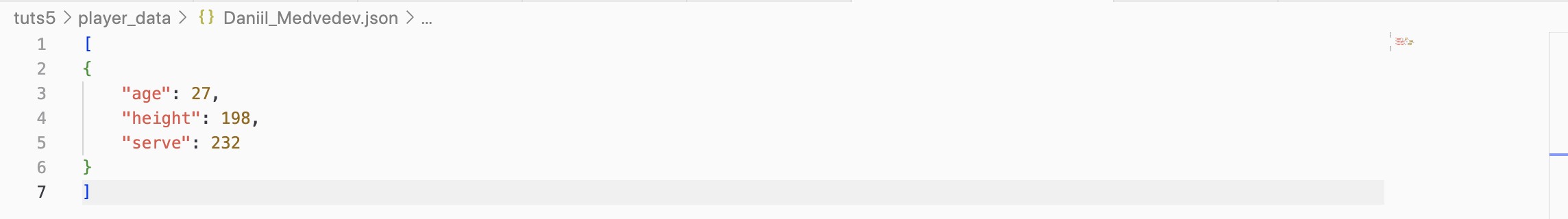

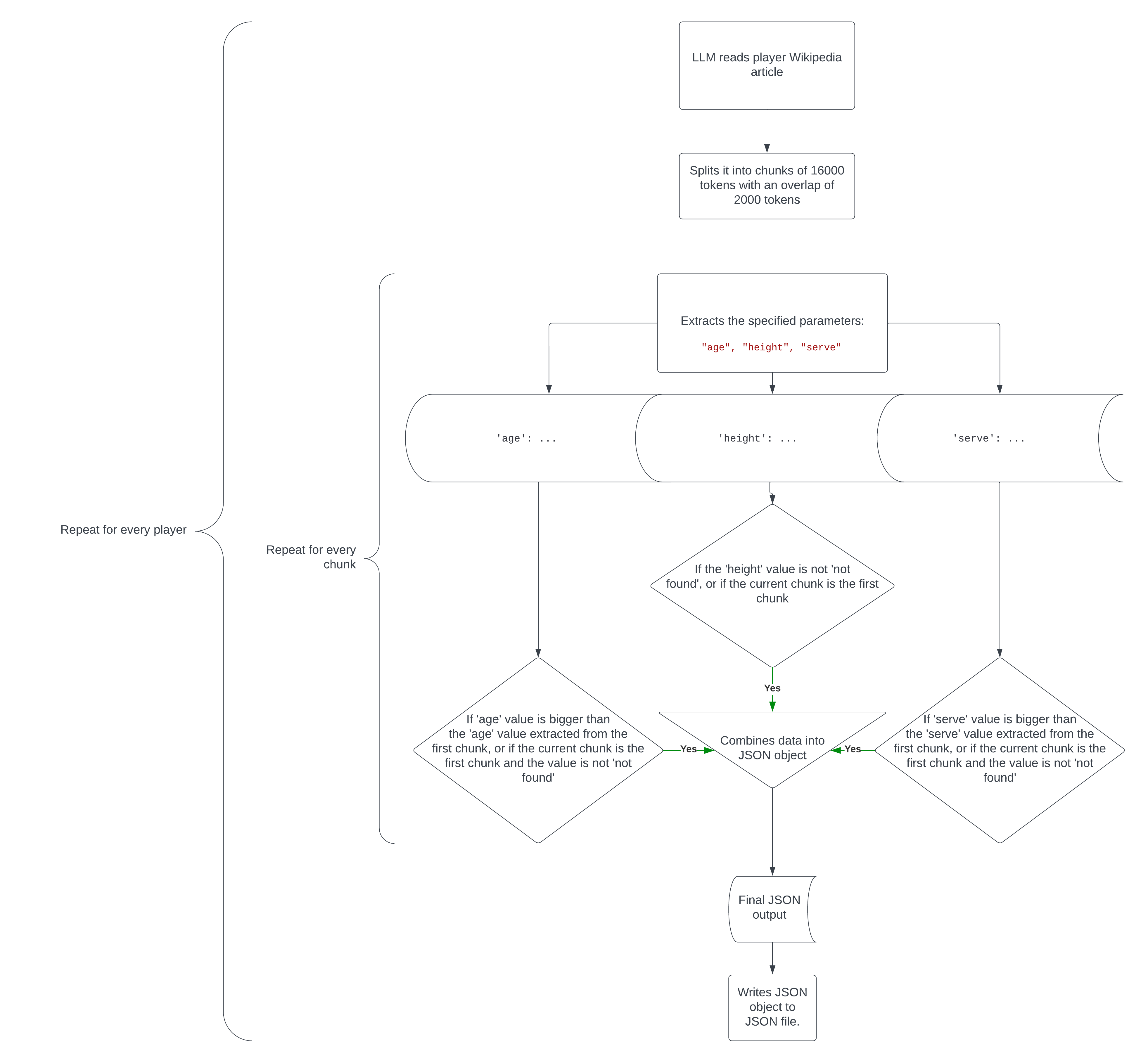
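The per-chapter loop can be sketched roughly as follows. Here `extract` is a hypothetical callable that stands in for the LangChain extraction chain (it takes a chapter's text and returns a dictionary), and `analyze_chapters` is a name I made up for this illustration.

```python
import json
from pathlib import Path
from typing import Callable


def analyze_chapters(
    chapters: list[str],
    extract: Callable[[str], dict],  # placeholder for the real LLM chain
    out_path: str,
) -> list[dict]:
    """Run `extract` on every chapter and write all results to one JSON file."""
    results = []
    for number, chapter in enumerate(chapters, start=1):
        record = {"chapter": number, **extract(chapter)}
        results.append(record)
        # Rewrite the file after each chapter so partial progress survives a crash.
        Path(out_path).write_text(json.dumps(results, indent=2), encoding="utf-8")
    return results
```

Passing the extraction step in as a function keeps the loop easy to try out with a fake extractor before spending API credits on a whole book.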

After the code finishes running, the extracted data for every chapter is in the shared JSON file. Here’s a visual representation of how this code works.

Working With Multiple Files

If you have dozens of separate files that you’d like to analyze one by one, you can use a script similar to the one you’ve just seen, but instead of iterating through chapters, it will iterate through files in a folder.

I’ll use the example of a folder filled with Wikipedia articles on the top 10 ranked tennis players (as of December 3, 2023). Here’s an example of an extracted player data file.

However, this code isn’t that simple (I wish it was). To efficiently and reliably extract the most accurate data from texts that are often too big to analyze without chunk splitting, I used this code:

In essence, this code does the following:

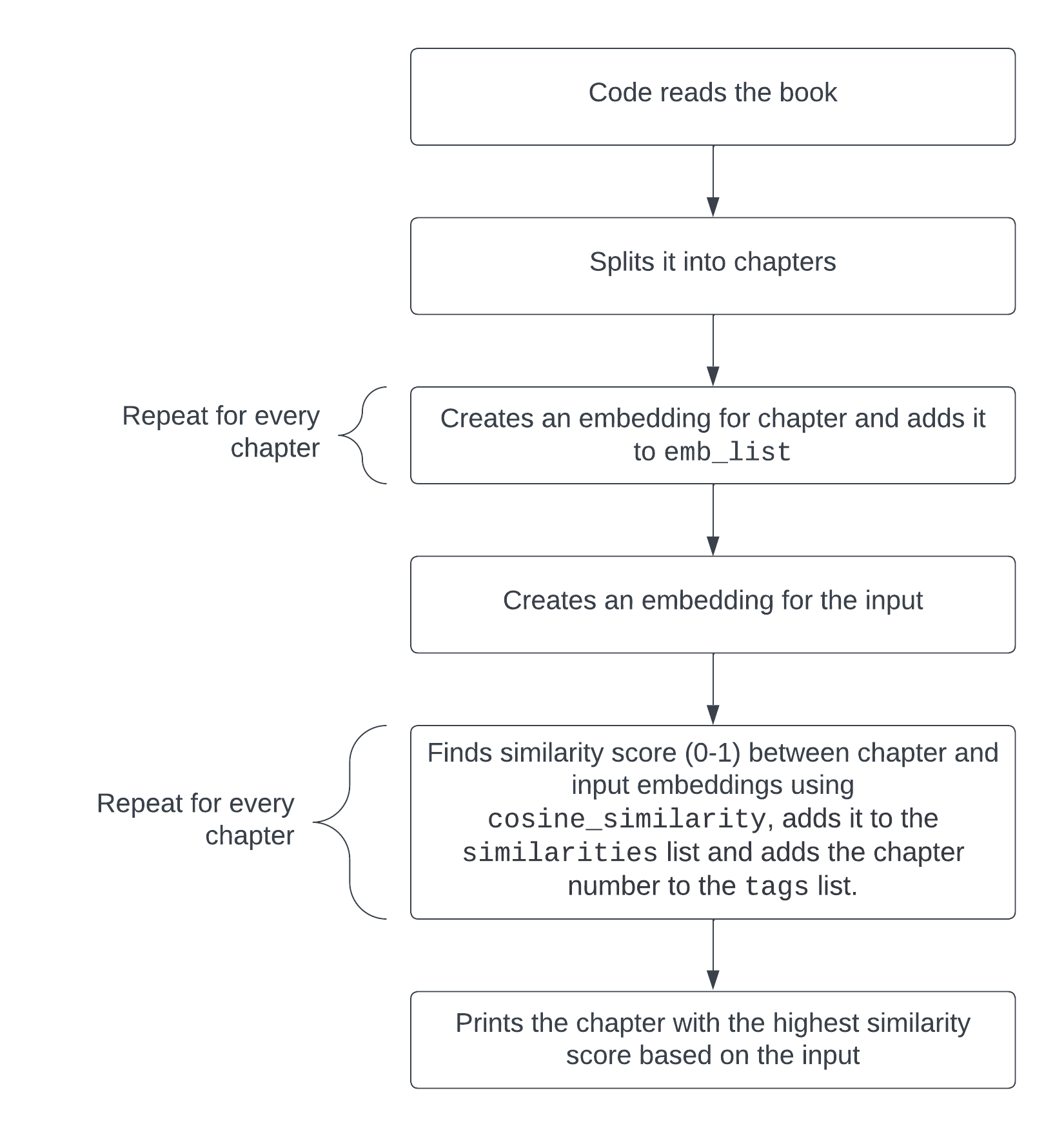
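For a sense of how the folder-based approach fits together, here is a simplified sketch. It is not the script described above: the chunk sizes, the "keep the first non-empty value" merge rule, and the names `split_into_chunks`, `analyze_folder` and `extract` are all assumptions for illustration, with `extract` again standing in for the LLM call.

```python
from pathlib import Path
from typing import Callable


def split_into_chunks(text: str, max_chars: int = 8000, overlap: int = 200) -> list[str]:
    """Cut text into windows of at most max_chars characters that overlap slightly."""
    if overlap >= max_chars:
        raise ValueError("overlap must be smaller than max_chars")
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + max_chars])
        if start + max_chars >= len(text):
            break  # this window already reached the end of the text
        start += max_chars - overlap
    return chunks


def analyze_folder(folder: str, extract: Callable[[str], dict]) -> dict[str, dict]:
    """Extract data from every .txt file in `folder`, merging per-chunk results."""
    results = {}
    for path in sorted(Path(folder).glob("*.txt")):
        merged: dict = {}
        for chunk in split_into_chunks(path.read_text(encoding="utf-8")):
            for key, value in extract(chunk).items():
                # Assumed merge rule: keep the first non-empty value for each field.
                if value and not merged.get(key):
                    merged[key] = value
        results[path.stem] = merged
    return results
```

Splitting by characters is a crude proxy for tokens, so in practice you would size the chunks against the model's context window, for example with a token-aware splitter.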

Here’s how the whole code works in a flowchart.

Text to Embeddings

Embeddings are lists of numbers (vectors) that represent pieces of text, so that texts with related meanings can be associated with each other. A big aspect of text analysis in LangChain is searching large texts for specific chunks that are relevant to a certain input or question.

We can go back to the example with the Beyond Good and Evil book by Friedrich Nietzsche and make a simple script that takes a question on the text like “What are the flaws of philosophers?”, turns it into an embedding, splits the book into chapters, turns the different chapters into embeddings and finds the chapter most relevant to the inquiry, suggesting which chapter one should read to find an answer to this question as written by the author.

You can find the code to do this here. This code in particular is what searches for the most relevant chapter for a given input or question:
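The core of such a search is a similarity comparison between vectors. The sketch below shows that comparison with cosine similarity in plain Python. The `embed` argument is a placeholder for a real embedding model, and `toy_embed` is a deliberately crude word-count stand-in that exists only so the example runs offline; neither name comes from the original script.

```python
import math
from typing import Callable, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def most_relevant_chapter(
    question: str,
    chapters: Sequence[str],
    embed: Callable[[str], Sequence[float]],  # placeholder for a real embedding model
) -> tuple[int, list[float]]:
    """Return the 1-based number of the best-matching chapter and all similarity scores."""
    question_vec = embed(question)
    similarities = [cosine_similarity(question_vec, embed(ch)) for ch in chapters]
    best_index = max(range(len(similarities)), key=similarities.__getitem__)
    return best_index + 1, similarities


def toy_embed(text: str) -> list[float]:
    """Stand-in embedding for demonstration only: counts of a few fixed words."""
    vocab = ["truth", "philosophers", "morality", "religion"]
    words = text.lower().split()
    return [float(words.count(word)) for word in vocab]
```

With a real model you would pass its embedding function as `embed` and cache the chapter vectors, since embedding the whole book again for every question is wasteful.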

The embedding similarities between each chapter and the input get put into a list.

Output:

Here’s how this code works visually.

Other Application Ideas

There are many other analytical uses for large texts with LangChain and LLMs, and even though they’re too complex to cover in this article in their entirety, I’ll list some of them and outline how they can be achieved in this section.

Visualizing topics

You can, for example, take transcripts of YouTube videos related to AI, like the ones in this dataset, extract the AI-related tools mentioned in each video (LangChain, OpenAI, TensorFlow, and so on), compile them into a list, and find the overall most mentioned AI tools, or use a bar graph to visualize the popularity of each one.

Analyzing podcast transcripts

You can take podcast transcripts and, for example, find similarities and differences between the different guests in terms of their opinions and sentiment on a given topic. You can also make an embeddings script (like the one in this article) that searches the podcast transcripts for the most relevant conversations based on an input or question.

Analyzing evolutions of news articles

There are plenty of large news article datasets out there, like this one on BBC news headlines and descriptions and this one on financial news headlines and descriptions. Using such datasets, you can analyze things like sentiment, topics and keywords for each news article. You can then visualize how these aspects of the news articles evolve over time.

Conclusion

I hope you found this helpful and that you now have an idea of how to analyze large text datasets with LangChain in Python using different methods like embeddings and data extraction. Best of luck in your LangChain projects!

via Pixel Lyft https://ift.tt/gOfWRGB
